Here we go again: Another fight for platform dominance. This time, of course, it’s about winning the AI race.

Anthropic’s launch of Claude Managed Agents this week is the clearest signal yet that the AI market has entered a new phase. This is not just another model release. It is Anthropic moving from selling intelligence to selling execution infrastructure: secure sandboxing, long-running sessions, orchestration, and governance, all tied together with Anthropic’s existing products such as Claude Code.

That is platform behavior, not model-vendor behavior.

The IT industry has always been shaped by large platforms that competed fiercely: Wintel vs. Mac, iOS vs. Android, PlayStation vs. Xbox vs. Nintendo Switch, AWS vs. GCP vs. Azure. The list goes on.

Silicon Valley is obsessed with platforms for a simple reason: The most valuable companies in the world are all platform companies. Winning a platform race can be extremely lucrative, but competing in one is not cheap. There’s a reason why Mark Zuckerberg spent tens of billions of dollars hoping to win the VR platform race, so far without much to show for it.

By far the most important platform race is the one in AI, and in particular the race for control of the enterprise AI stack.

From model war to platform war

For the last couple of years, the AI market mostly looked like a model war: Better reasoning, better coding, better multimodality. Better benchmarks. But that was always an incomplete picture.

Platform wars begin when the leading players stop shipping isolated components and start assembling the full stack above them. That is what is happening now. Anthropic’s move into managed runtime is one clear proof point. OpenAI’s Frontier vision is another: it explicitly frames the future as shared context, onboarding, feedback loops, permissions, and AI coworkers operating across real business systems.

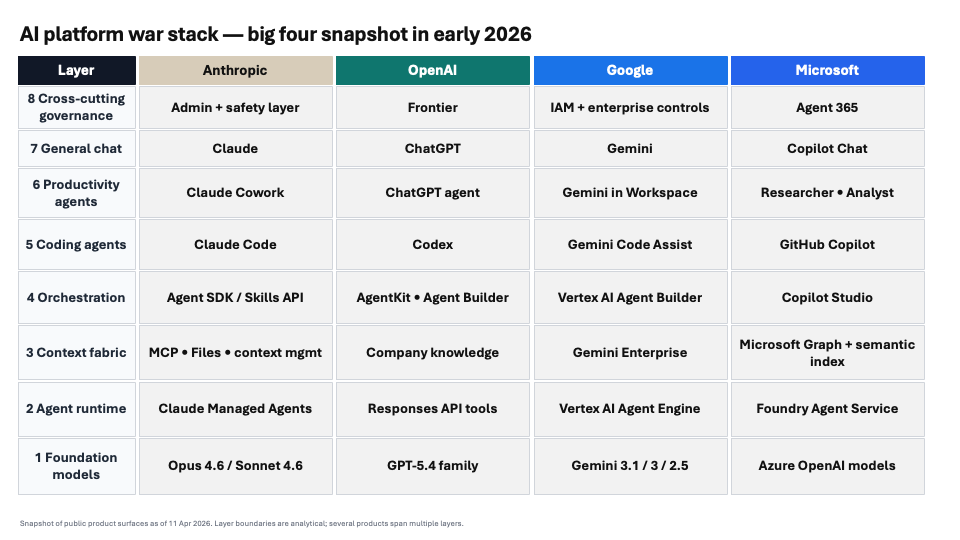

The stack is now visible. At the bottom sit the foundation models: LLMs, code, video, image generation. On top of that comes the agent runtime: hosted execution, sandboxes, tool use, sessions, browser or computer access, and long-running jobs.

Above that is context: enterprise search, connectors, memory, permissions-aware retrieval, and company knowledge. Then comes the builder/orchestration layer: workflow builders, reusable skills, agent coordination, evaluation, and deployment.

On top of that sit the application surfaces: coding agents, productivity agents, and finally the general-purpose chat interface that acts as the front door. And sitting across all of it is the layer most people still underestimate: governance — identity, permissions, tracing, audit, security, and control.

Right now there are four serious players competing for the entire stack: OpenAI, Anthropic, Google, and Microsoft. Of course many others offer bits and pieces in the stack, sometimes even leading in a particular area.

But if history is any guide, the race that really matters is the one for dominance of the entire platform stack. And the big four are the only serious contenders for it.

What the big four are offering

Anthropic is probably the company that has made the biggest transition in the last few months. Until recently, Anthropic was a company with very strong frontier models, a premium chatbot, and an increasingly strong coding product. With the introduction of the Model Context Protocol (MCP), an open integration protocol, Anthropic showed clear platform ambitions for the first time.

Then came massively extended versions of Claude Code as a universal agent platform, Claude Cowork as a productivity tool, and now an agent execution environment. Anthropic still has gaping holes in its lineup, such as lacking models for image and video generation, but it has focused remarkably well on what enterprises really value.

OpenAI on the other hand may still have the clearest articulation of what the end state looks like. Frontier is not pitched as a collection of tools, but as an enterprise platform for building, deploying, and managing AI agents with shared context and explicit permissions and boundaries.

While it has fallen behind Anthropic recently in the coding and agent areas, OpenAI is filling in the stack quickly. Codex is now a serious multi-agent surface for parallel, long-running work, expanding beyond coding. ChatGPT agent combines several operating modes and connectors. OpenAI’s runtime story may be less clearly implemented than Anthropic’s, but strategically it is obvious where this is going. OpenAI is trying to become the operating system for AI coworkers as well, and with a broader model capability footprint than Anthropic.

Of all four players, Google may have the broadest technical surface. On the cloud side, Vertex already offers a real agent runtime environment and many other enterprise components. On top of that, Google is building a serious context and distribution layer, benefiting from its strong Google Workspace product. It also has an agent and workflow builder and a range of capable (but not yet very popular) coding tools. Gemini is a strong chatbot product, benefiting from the quality of its models, including the very popular image and video generation tools.

Google’s weakness has historically been a product strategy that can feel quite fragmented. It’s the same in AI: The perceived quality of Google AI products ranges from mind-blowing to “what were they thinking when they released that?” And deep integration between the pieces seems to be a challenge as well.

Finally, Microsoft may have the strongest enterprise endgame, even if it is not always obvious. Its advantage is not the model layer, where the company combines some specialized models of its own with the big models from OpenAI and more recently Anthropic. The real strength is the fact that Microsoft already owns so much of the environment where work happens in enterprises: Microsoft Office/365, Teams, GitHub, and so on. Copilot is certainly not perceived in the market as the strongest chatbot product, but it is still ubiquitous in many companies.

Microsoft is also building the runtime and builder layers aggressively with Foundry, Copilot Studio and various Azure products. However, navigating the complexity of Microsoft’s offerings is challenging.

What they are fighting over

The surface-level interpretation is that all of these vendors want a bigger share of wallet from B2B customers. That’s true, but incomplete. What they are really fighting over is being the default environment in which AI work happens.

Being the default platform for work can be very lucrative. Just ask Bill Gates.

In prior platform wars, the winner usually owned the point of highest control and value creation. In the PC era it was the standard stack of CPU and OS, which then allowed Microsoft to dominate productivity apps as well. In mobile it was the operating system and app store. In cloud it was the control plane and service breadth.

We don’t know yet what this “choke point” will be in AI. For a while, it seemed like model capabilities would decide the race, but with several large players now performing at very similar levels, this no longer seems to be true. For B2B environments, it might be a combination of runtime, context, and governance, closely integrated with the best models.

OpenAI and Anthropic already train their latest models in close connection with their agent harnesses. This is a clear sign of vertical integration that is motivated not just by business considerations, but also by technical performance.

The winning platform will be the one that makes it easiest to deploy agents that are trusted, connected, and embedded in real workflows. This is also why I think governance deserves to be treated as a first-class platform layer. All four players talk about this, with Microsoft probably having the most experience. Over time, this may become the least glamorous but most important part of the platform war.

What about other players?

Of course it’s not just the big four mentioned here that want to be the owner of a platform that runs your agents. Various other players are in the race as well, but they seem clearly weaker by comparison because they don’t own enough of the stack.

AWS has built strong infrastructure, but has no meaningful footprint in models and UIs. Salesforce is pushing its agent infrastructure as an extension of its core product line-up, but that’s not terribly appealing to non-Salesforce customers. Things look similar for SAP, Oracle and other incumbents.

Then there are of course a bunch of smaller, specialized players that have a best-of-breed product in a particular layer of the stack.

That’s a tough spot to be in. Just look at how Cursor went from everybody’s favorite coding tool to something people barely mention anymore, in a matter of months. Competing against a reasonably capable tool provided by a platform owner is difficult.

Will interfaces be open or closed?

There is one interesting difference from earlier platform wars: The underlying plumbing and integration points in the AI agent stack may become standardized and open even while the platforms compete on UX, governance, and models.

Historically, platforms tried to control their inner workings closely and define precisely where third parties are allowed to play. Apple has to approve your app for the App Store. Microsoft tells you exactly which APIs your Windows application can use. The cloud providers try to lure you into using their bundled services instead of rolling your own from open source components. It’s all about control and customer lock-in.

In AI, we have seen an early proliferation of open standards. MCP allows the connection of external tools and data, and all major players support it. Google introduced its Agent2Agent protocol as an open standard. And it is already quite easy to transfer skills between the platforms. I often develop skills in Codex (which I find the most capable for coding) and then run them in Claude or OpenClaw. This is surprisingly easy.

But will it stay that way? I doubt it. The big winners in previous platform wars were those that used open standards to win market share, but at the same time captured value with their own proprietary solutions. Sometimes a fully open stack ended up winning (such as LAMP for websites), but that’s rare.

What this means for startups

For startups, the easy era of “just build a nice wrapper on top of the frontier models” or “provide an infrastructure tool that enables cool stuff behind the scenes” is clearly ending. The big platforms have very broad ambitions, and they have to have them, considering all the money that is at stake.

Many startups currently position themselves as what are generally called “platform complementors”: They fill holes in the line-up of the large platforms where the big players don’t have a solid offering yet, such as workflow engines, agent orchestrators, LLM security, and memory platforms. These are all good ideas, and there is a clear market need. That’s one reason why some of these companies can grow very quickly.

But historically, it’s a dangerous position to be in. If you’re under 35 years old or so, you have probably never heard names like Borland, PowerBuilder, Lotus Development, Ashton-Tate, or Sybase. These were all very successful software businesses in the 1980s and 1990s that filled holes in the line-up of Microsoft and IBM. They largely disappeared when the platform owners encroached on their territory.

Of course you can build successful companies even in a world of large platforms. For example, Snowflake and Databricks became arguably the most important players in the data warehouse space, despite the big cloud providers having competing offerings. If you truly have a best-of-breed product that is wide enough not just to be a feature on somebody else’s roadmap, there is plenty of space.

Another successful strategy is to be the cross-platform vendor on a relevant layer. Oracle is the most famous example: It became the most important database vendor because it was able to run on all large computing platforms.

It’s likely that things will play out in similar ways in AI. The durable opportunities are either above the platforms or between them: vertical agents, proprietary workflow UX, domain-specific context, governance and compliance, specialized orchestration, and multi-platform abstractions. The dangerous place to be is the middle: thin applications or infrastructure features that depend on one platform, while owning none of the broader context themselves.

That is what platform wars do. They compress the value of undifferentiated layers and increase the value of control points.

Startups should study the history of platforms. How you position your company in this new phase of the market will determine whether you succeed or fail.

—

Book tip: “The Business of Platforms” by Michael Cusumano, Annabelle Gawer and David B. Yoffie